Amazon Web Services (AWS) and OpenAI have entered into a landmark multi-year strategic partnership that is set to reshape the infrastructure powering frontier artificial intelligence (AI). The collaboration grants OpenAI large-scale access to AWS’s world-class compute infrastructure, allowing it to train and deploy its most advanced AI models to date.

This alliance represents one of the largest technology infrastructure commitments in history. The agreement is valued at an estimated 38 billion USD in compute capacity, hardware resources, and cloud services to support OpenAI’s ambitious roadmap. It provides immediate access to AWS’s GPU and CPU clusters, with room to expand to tens of millions of CPUs through 2027 and beyond.

A Partnership Built for the Frontier of AI

The partnership brings together two of the most influential organizations in the modern AI landscape. AWS, long recognized as a leader in cloud infrastructure and enterprise technology, will supply the computing power, networking, and data-management capabilities needed to train massive frontier models. OpenAI, the creator of breakthrough technologies such as ChatGPT, DALL·E, and Codex, will leverage this infrastructure to accelerate research, development, and deployment of next-generation AI systems.

Both companies describe this as more than a technology agreement: they call it a shared commitment to scaling AI safely, efficiently, and sustainably. The collaboration focuses on giving OpenAI the flexibility to deploy workloads on a platform that can deliver reliable performance at unprecedented scale.

Building the World’s Most Advanced AI Infrastructure

Under the agreement, AWS will make its most powerful compute environment available to OpenAI. This includes clusters built on Amazon EC2 UltraServers equipped with NVIDIA’s latest GB200 and GB300 GPUs, optimized specifically for large-scale AI training and inference. The infrastructure is engineered for low-latency interconnects, high-bandwidth data transfer, and near-zero downtime — critical requirements for training frontier-sized models.

In addition to GPUs, OpenAI will gain access to tens of millions of CPUs within AWS’s elastic compute infrastructure. This hybrid configuration allows workloads to scale dynamically across both GPU- and CPU-based systems, balancing training, inference, and deployment needs efficiently. AWS’s deep expertise in managing hyperscale clusters ensures that OpenAI’s compute needs can grow rapidly without sacrificing reliability.

The initial phase of deployment begins immediately, with full capacity expected to be operational by the end of 2026. The agreement also includes provisions for additional capacity expansion through 2027 and beyond as AI models continue to increase in size and complexity.

Transforming AI Compute Strategy

This partnership signals a major evolution in how AI infrastructure is sourced and managed. In recent years, as the scale of AI models has grown exponentially, compute resources have emerged as one of the greatest limiting factors in innovation. The AWS–OpenAI collaboration addresses that challenge directly, helping OpenAI train and deploy the next generation of multimodal and reasoning-capable AI systems with fewer capacity bottlenecks.

It also highlights a strategic shift in the AI ecosystem — toward diversified, multi-cloud infrastructure strategies. Rather than relying on a single provider, OpenAI is expanding its partnerships to ensure reliability, cost-efficiency, and resilience. For AWS, the collaboration reinforces its leadership as a global provider capable of handling the world’s most demanding AI workloads.

Delivering Performance at Scale

AWS’s infrastructure is built to handle the extreme demands of AI model training. Its EC2 UltraServers are designed for energy efficiency, dense compute power, and fast data movement. The system architecture lets GPUs communicate over ultra-low-latency, high-bandwidth links, which is critical when training models with trillions of parameters on vast datasets.

The compute clusters will be connected by AWS’s high-speed networking fabric, ensuring rapid synchronization across thousands of GPUs. Storage systems are optimized for petabyte-scale data handling, while AWS’s control plane technologies will allow OpenAI to orchestrate workloads seamlessly across multiple regions.

Together, these systems make up what AWS describes as an “AI-ready supercloud” — capable of supporting not just large-language-model training but also real-time inference, robotics, multimodal AI, and agentic systems that can reason and plan over long time horizons.

Leadership Perspectives

Andy Jassy, CEO of Amazon, emphasized that the collaboration is about more than raw compute. It represents a shared vision for how AI can transform industries, from healthcare and finance to manufacturing and education. “AWS has spent years building the most flexible and secure cloud infrastructure in the world,” he said. “By working with OpenAI, we’re ensuring that the next wave of AI innovation is powered by infrastructure that can scale with it, responsibly, efficiently, and sustainably.”

OpenAI CEO Sam Altman expressed similar enthusiasm, noting that scaling frontier AI models requires both compute power and reliability at a global scale. “The partnership with AWS gives us access to a massive, dependable platform that will allow us to continue pushing the boundaries of what AI can do,” he said. “Our goal has always been to make advanced AI broadly useful and accessible — this agreement helps make that possible.”

Impact Across the AI Ecosystem

The implications of this partnership extend far beyond the two companies involved. For AI developers and researchers, it sets a new benchmark for how large-scale model training can be conducted in a secure, cloud-native environment. For enterprises and consumers, it promises faster access to more capable AI systems — with improved performance, reduced latency, and greater stability.

As OpenAI continues to expand its product lineup — from ChatGPT to enterprise APIs and creative tools — these services will benefit from the expanded compute capacity and reliability provided by AWS. Businesses that integrate OpenAI’s models through AWS infrastructure can expect faster innovation cycles, improved scalability, and enhanced global availability.

At a macro level, the collaboration underscores the growing importance of AI infrastructure as a differentiator in the technology industry. Cloud providers are no longer competing solely on storage or compute pricing, but on their ability to deliver tightly integrated, AI-optimized platforms that can power the world’s most complex workloads.

Driving Responsible and Sustainable AI Growth

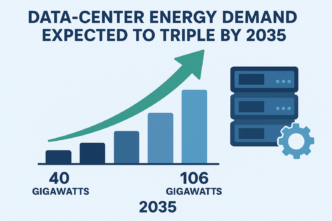

Both companies have emphasized that sustainability will be a core focus of the partnership. AWS’s data centers increasingly use renewable energy and energy-efficient cooling systems, helping to reduce the environmental impact of large-scale AI training. OpenAI and AWS have committed to exploring innovations in energy efficiency, chip utilization, and workload scheduling to reduce carbon intensity over time.

This focus aligns with a broader industry trend: as AI scales, so too must efforts to ensure its growth is sustainable. By combining OpenAI’s expertise in model optimization with AWS’s advances in green computing, the two companies aim to build a framework for responsible, efficient AI infrastructure at unprecedented scale.

Looking Ahead

The AWS–OpenAI partnership represents a pivotal moment in the evolution of artificial intelligence. It blends OpenAI’s groundbreaking research and software innovation with AWS’s unmatched hardware, cloud, and operational capabilities. Together, they are laying the foundation for the next era of AI — one defined by scale, capability, and accessibility.

As this collaboration unfolds over the coming years, it will likely shape the trajectory of global AI development. From new model architectures to real-time reasoning systems and generative tools that understand and interact with the world, the compute made possible through AWS will empower OpenAI to continue advancing the frontier of intelligence.

In short, this partnership is not merely about servers and GPUs — it’s about building the digital backbone of the AI revolution. It’s about ensuring that as artificial intelligence evolves, the world has the infrastructure to keep pace. And it’s about setting a new standard for what global technology collaboration can achieve.